COMPUTER SCIENCE

HTML5

The importance of NoSQL Databases ..

The importance of In-memory Databases ..

Data Partitioning ..

Data partitioning is the process of logically and/or physically dividing data into segments that are easier to maintain or access. Current RDBMS products provide this kind of distribution functionality. Partitioning helps with both query performance and utility processing.

Data partitioning can greatly help with the efficient and effective management of a highly available relational data warehouse. It can also be a complex process: several factors affect the partitioning strategy, and design, implementation, and management all need consideration in a data warehousing environment.

A data warehouse powered by a relational database management system can provide a comprehensive source of data and an infrastructure for building Business Intelligence (BI) solutions. Typically, implementing a relational data warehouse involves creating and managing dimension tables and fact tables. A dimension table is usually smaller than a fact table and provides details about the attributes used to describe or explain business facts. Examples of dimensions include item, store, and time. A fact table, on the other hand, records business events, such as item sales for all the stores. All fact tables need to be updated periodically with the most recently collected data from the various data sources.

Since data warehouses need to handle high volumes of regularly updated data, careful long-term planning is beneficial. Factors to consider in the long-term planning of a data warehouse include data volume, data loading window, index maintenance window, workload characteristics, data aging strategy, archive and backup strategy, and hardware characteristics.

There are two approaches to implementing a relational data warehouse: monolithic approach and partitioned approach. The monolithic approach may contain huge fact tables which can be difficult to manage.

There are many benefits to implementing a relational data warehouse using the data partitioning approach. The single biggest benefit is easy yet efficient maintenance. As an organization grows, so does the data in its database. Critical data must remain highly available while the database maintenance window stays small. Data partitioning can meet the need for a small maintenance window even in a very large business organization. With data partitioning, the problems of supporting large tables can be addressed by having the database decompose large chunks of data into smaller partitions, which are easier to manage. Data partitioning also results in faster data loading, easier monitoring of aging data, and more efficient data retrieval.

Data partitioning in a relational data warehouse can be implemented by partitioning objects such as base tables, clustered and non-clustered indexes, and indexed views. Range partitions are table partitions defined by a customizable range of data. The end user or database administrator defines a partition function with boundary values, a partition scheme with filegroup mappings, and the tables that are mapped to the partition scheme.
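As a rough illustration of how a partition function with boundary values assigns rows to range partitions, here is a minimal Python sketch. The boundary dates, the `partition_for` and `partition_rows` names, and the `(sale_date, amount)` row layout are all assumptions made for this example; they are not taken from any particular RDBMS. In this sketch each boundary value starts a new partition, so a row that falls exactly on a boundary goes to the partition on its right.

```python
from bisect import bisect_right
from datetime import date

# Hypothetical boundary values; each one starts a new partition.
BOUNDARIES = [date(2023, 1, 1), date(2024, 1, 1), date(2025, 1, 1)]


def partition_for(sale_date: date) -> int:
    """Return the 0-based partition index for a row's partitioning key."""
    # bisect_right counts how many boundaries are <= sale_date,
    # so a key equal to a boundary lands in the partition to its right.
    return bisect_right(BOUNDARIES, sale_date)


def partition_rows(rows):
    """Group (sale_date, amount) rows into len(BOUNDARIES) + 1 partitions."""
    partitions = [[] for _ in range(len(BOUNDARIES) + 1)]
    for row in rows:
        partitions[partition_for(row[0])].append(row)
    return partitions


if __name__ == "__main__":
    fact_rows = [
        (date(2022, 6, 1), 120.0),
        (date(2023, 1, 1), 75.5),
        (date(2024, 5, 5), 310.0),
    ]
    for index, part in enumerate(partition_rows(fact_rows)):
        print(index, part)
```

Because each row's partition depends only on its key and the boundary list, old data can be aged out or archived one partition at a time without touching the others.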


The importance of Pagination in Web content ..

On the Internet, pagination is used for such things as displaying a limited number of results on search engine results pages, or showing a limited number of posts when viewing a forum thread. Pagination is used in some form in almost every web application to divide returned data and display it on multiple pages. Pagination also includes the logic of preparing and displaying the links to the various pages.

Pagination can be handled client-side or server-side. Server-side pagination is more common. Client-side pagination can be used when there are very few records to access: all records are returned at once, and the client uses JavaScript to display the separate pages. With AJAX, a hybrid of server-side and client-side pagination is possible, in which JavaScript requests the next page from the server and inserts it into the Document Object Model.

Server-side pagination is appropriate for large data sets. It provides a faster initial page load, accessibility for users not running JavaScript, and support for complex view business logic.
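A minimal Python sketch of the server-side idea follows: the server works out which slice of the records belongs to the requested page and returns only that slice. The `paginate` function, its parameters, and the shape of the returned dictionary are assumptions made for illustration. A real application would usually push the offset and limit into the database query rather than slice an in-memory list.

```python
import math


def paginate(records, page, per_page=10):
    """Return one page of records plus basic paging metadata."""
    if per_page < 1:
        raise ValueError("per_page must be at least 1")
    # Always report at least one page, even for an empty result set.
    total_pages = max(1, math.ceil(len(records) / per_page))
    # Clamp out-of-range page numbers instead of failing.
    page = min(max(page, 1), total_pages)
    start = (page - 1) * per_page
    return {
        "items": records[start:start + per_page],
        "page": page,
        "total_pages": total_pages,
    }


if __name__ == "__main__":
    posts = [f"post {n}" for n in range(1, 26)]
    print(paginate(posts, page=3, per_page=10))
```

Clamping the page number is one possible design choice; returning an error for an out-of-range page is equally reasonable.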

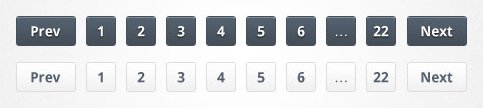

Correctly implementing pagination can be difficult. There are many usability questions: should "previous" and "next" links be included, how many page links should be displayed, and should there be links to the first and last pages? The ability to set the number of records displayed on a single page is also useful.
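One possible answer to these questions can be sketched in Python as follows. The `page_links` function and its `window` parameter are hypothetical names chosen for this example. It shows a fixed number of page links on either side of the current page and omits the "first", "previous", "next", and "last" links when they would point at the current page.

```python
def page_links(current, total_pages, window=2):
    """Describe which navigation links to render for the current page."""
    # Show up to `window` numbered links on each side of the current page.
    first_shown = max(1, current - window)
    last_shown = min(total_pages, current + window)
    return {
        "first": 1 if current > 1 else None,
        "previous": current - 1 if current > 1 else None,
        "pages": list(range(first_shown, last_shown + 1)),
        "next": current + 1 if current < total_pages else None,
        "last": total_pages if current < total_pages else None,
    }


if __name__ == "__main__":
    print(page_links(current=5, total_pages=10))
    print(page_links(current=1, total_pages=3))
```

A template can then render each non-`None` entry as a link and the current page as plain text.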

The importance of Content Management System (CMS) ..

- Allow for a large number of people to contribute to and share stored data

- Control access to data, based on user roles (defining which information users or user groups can view, edit, publish, etc.)

- Aid in easy storage and retrieval of data

- Control of data validity and compliance

- Reduce repetitive duplicate input

- Improve the ease of report writing

- Improve communication between users
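The role-based access control mentioned in the list above can be sketched in a few lines of Python. The role names, the action names, and the `can` helper are hypothetical and serve only to illustrate the idea; an actual CMS would define its own roles and permissions.

```python
# Hypothetical mapping of roles to the actions they may perform.
ROLE_PERMISSIONS = {
    "viewer": {"view"},
    "editor": {"view", "edit"},
    "publisher": {"view", "edit", "publish"},
}


def can(role, action):
    """Return True if the given role is allowed to perform the action."""
    # Unknown roles get an empty permission set, so they are denied by default.
    return action in ROLE_PERMISSIONS.get(role, set())


if __name__ == "__main__":
    for role in ("viewer", "editor", "publisher", "guest"):
        print(role, "may publish:", can(role, "publish"))
```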

WebDNA: an incredibly flexible scripting language and database system ..

WebSphere Application Server ..

A scalable application foundation that can grow from a single server, to moderately sized departmental configurations, to large-scale dynamic web applications that require web tier clustering and failover across multiple application server instances.

Optimize developer productivity and web application deployment with the new Liberty Profile option, an ultra lightweight, fast starting, highly composable application server profile.

Improve productivity and resiliency, and roll out new application versions without outages for your end users, with Application Edition Management.

Realize higher application availability for end users with new Application Health Management, which monitors the status of your application servers and senses and responds to problem areas before end users suffer an outage.

Improve business results by ensuring priority is given to business-critical applications with new Intelligent Routing features that prioritize and route requests based on administrator-defined rules.

Handle spikes in demand by dynamically provisioning, starting, and stopping new instances of application server Java Virtual Machines (JVMs) based on workload demands.

Gain higher quality of service by leveraging a common Java infrastructure for OLTP and batch applications with new Enterprise Batch Workload support that can be executed across multiple Java EE environments.

Developer tool options to match project development needs, support for Java 7 and OSGi, enhanced Migration Toolkit support, and support for the Web 2.0 and Mobile Toolkit.

A variety of pricing alternatives, including socket-based pricing